VeeamON: Getting hands-on with DataLabs

The big new product announcement at VeeamON this year is Veeam DataLabs, an extension of the company's Virtual Lab offering.

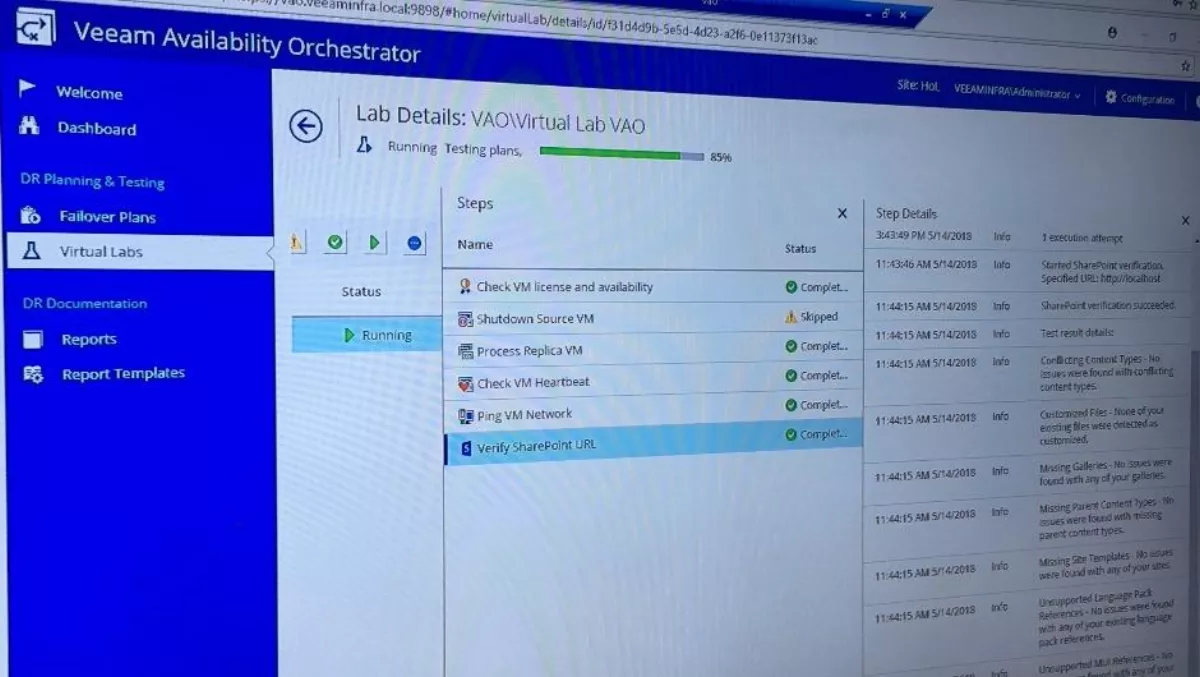

Veeam software engineer Marty Curlett gave me a real-time demonstration of DataLabs in operation, explaining exactly how it can save IT professionals, and the organisations they work for, time and money while mitigating the risks that are often associated with testing and DevOps.

Curlett quickly sets up a virtual lab that is an exact replica of the production environment and returns the same feedback for any input, without affecting production.

"We are building up a virtual lab appliance," Curlett explains as he walks me through the setup steps.

"It's nothing more than a dumb router that does not allow any traffic out but allows traffic in. It's just a NAT (network address translation) device. Inside the VAO (Veeam Availability Orchestrator) server, we have an embedded VNR (virtual network reconfiguration) server that just does the replication jobs for this environment.

Once that's done, no more than a few minutes of work, Curlett begins the process of setting up a failover plan.

"The whole purpose of starting up a virtual lab is that we're gonna create a failover plan," he says.

"It's going to allow for us to test the functionality of that failover without ever impacting the production VMs (virtual machines). We will be simulating a replication disaster, so to speak, and we're going to make sure that everything works in that failover scenario.

Curlett navigates through a few windows, clicks a few boxes and enters some basic information including his email address - a required step for reasons that will become clear soon.

"In the past, failover plans have been kind of daunting for customers because they want to failover all of the production," he says.

"I've sat with a lot of customers over the years that have to go in on the weekend to test their failovers, giving up personal time. All of this stuff we're doing here is going to relieve them of the need to spend time because we can schedule tests as well as failovers themselves, which don't impact the productions.

Following each scheduled test, a comprehensive PDF report is generated. It includes links for navigating the document, every step the process undertook, and a timestamp for when each step occurred.

"You need an easy button to do a failover and you want to be able to give permissions for other people in your organisation, not just your VCDR (virtual cloud disaster recover) guy, to do these. You can give them to site admins, application admins," says Curlett.

You won't lose sight of what they are doing, however, thanks to the email address entered earlier.

"We send notifications out any time something is done to this failover plan. Whether it's been run, executed, tested or anything like that, we send notifications to you.

So, you've run your failover tests every day and can prove to insurers and auditors that you are up to spec through your reports, but what happens in the case of an actual disaster?

"Imagine a volcano disrupts your production environment," Curlett posits.

"This is all run off your secondary or disaster site, so if your production data center is down you can get your data back up in a hypersensitive way. We can even do a permanent failover to make sure that VM stays over there and it starts replicating back once the data center is back and online.

"What's changed in the last two years is the ability to automate these replication jobs. We take production data and as it's updated send it over to your disaster site at intervals of 15 minutes or greater. Once that's built, and we know we have a copied VM from one site to another, it is a matter of orchestrating these failovers.

Throughout the process, Curlett shows me how he can move in and out of the various components - heartbeat testing, ping testing, or whatever other checks the user has set up - to see in granular detail how each is progressing, along with a description of what it does.

"It really is about intelligent data management," he says, calling on VeeamON's defining phrase.

"We're not talking about data synergy anymore, we're talking about applications and containers and making sure that business continuity takes place from a business perspective instead of an IT perspective.

"We want to make sure that business is served and we are allowing them to continue their business. That really is the bottom line.